When Veeam Backup & Replication version 12 is released, it will bring the Veeam community several long-requested features. One of these is direct backup to object storage. In previous releases, backing up to object storage was possible, but only through the Move/Copy functionality built into Scale-Out Backup Repositories, which meant additional on-premises storage was required. With that requirement gone, users should see some storage savings, reduced complexity, and better scalability in environments that typically see significant growth in data footprint.

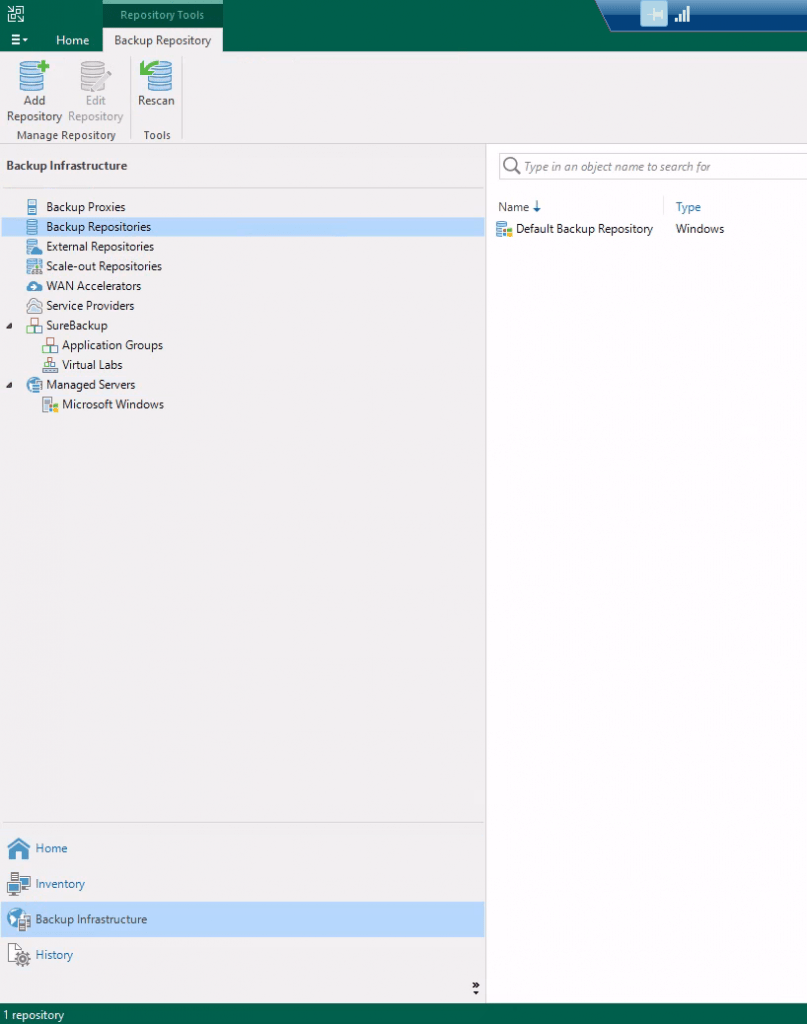

Adding an S3-compatible repository is a fairly simple process. First, launch the Veeam Backup & Replication console. In the navigation menu at the bottom left, select “Backup Infrastructure”. Then, select “Backup Repositories” from the left-hand menu.

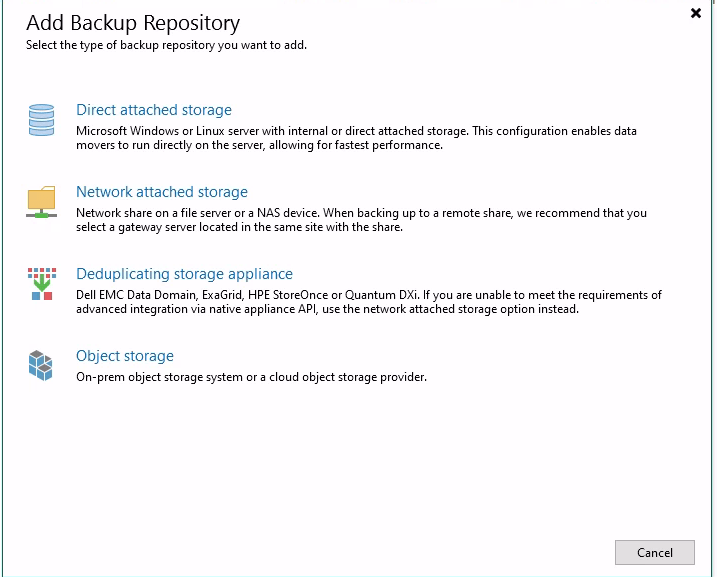

Next, click “Add Repository” in the top menu. In the newly opened window, select “Object Storage”.

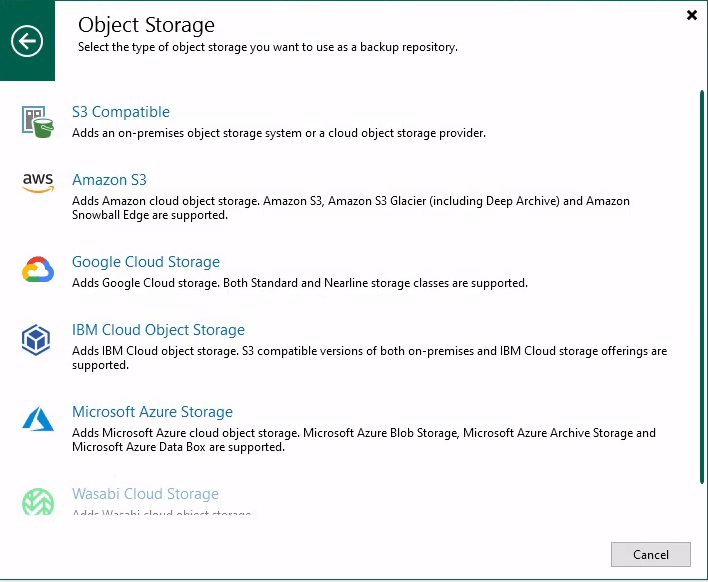

Select the object storage type. In this case, I will be connecting to S3-compatible storage hosted by a Ceph server in my lab, so I’ll select “S3 Compatible”.

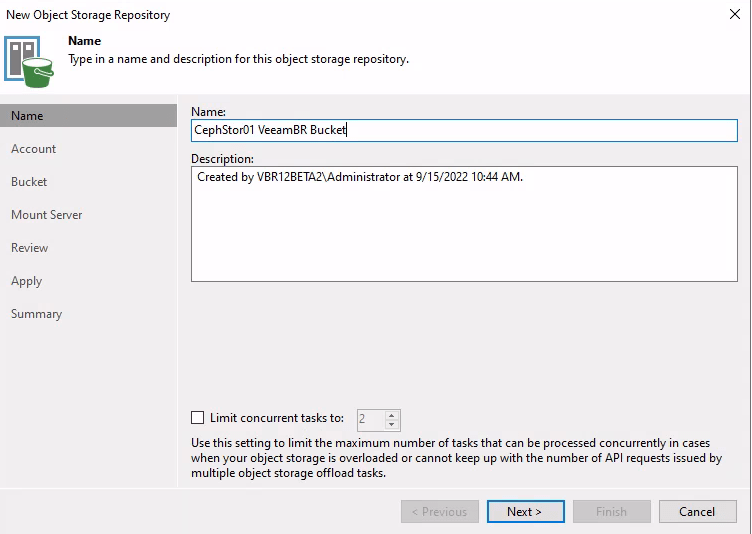

Next, give the repository a name and description. If you’d like to limit the number of concurrent tasks on the repository, you can do so here as well.

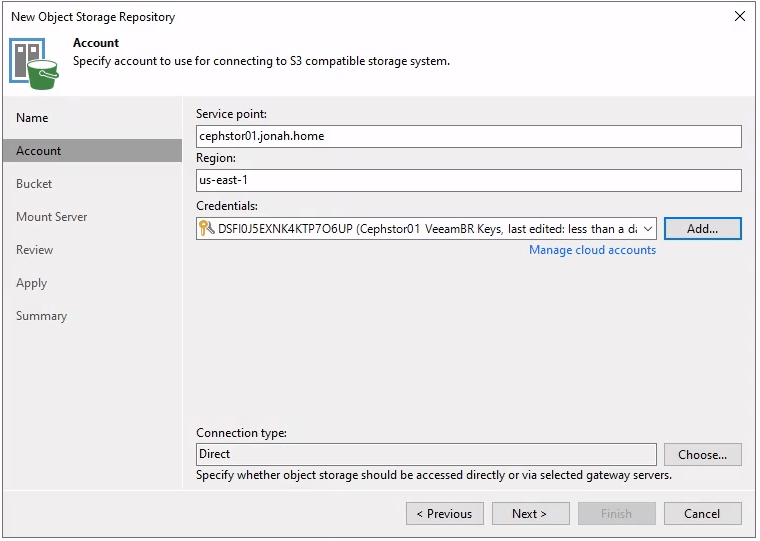

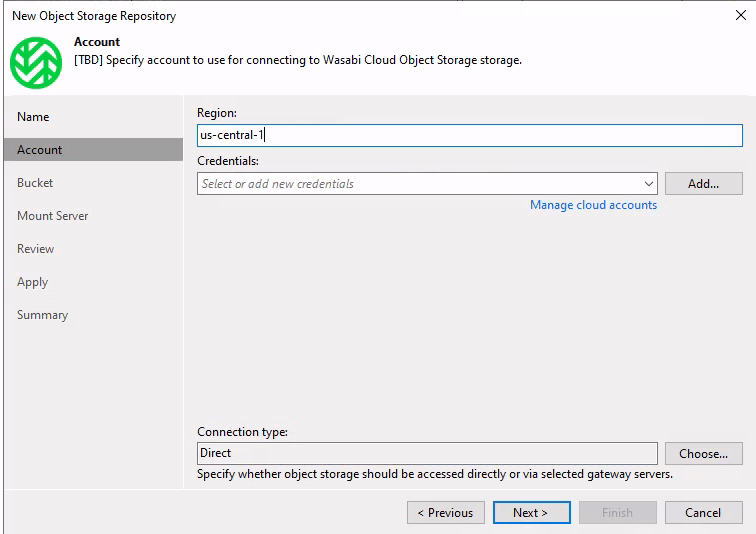

Next, you’ll specify the service point, region, and access credentials. You can also specify a gateway server to route traffic through.

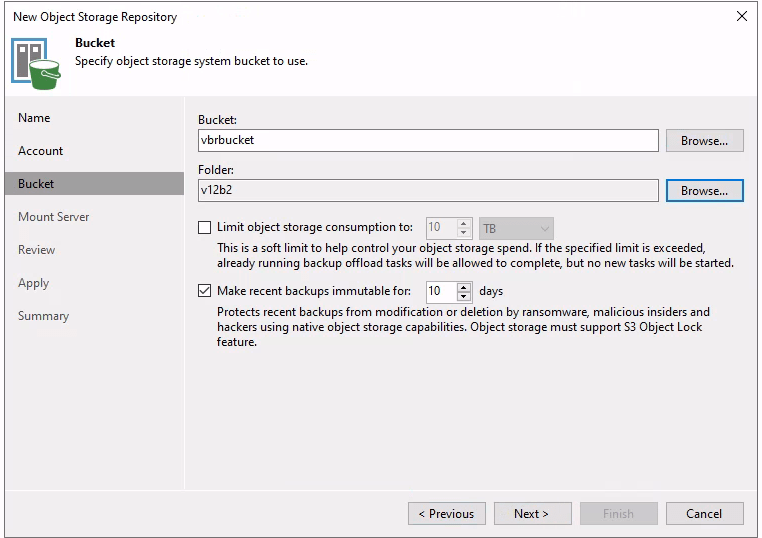
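Since a mistyped service point is an easy way to get stuck at this step, here is a minimal sketch of how I might sanity-check the URL before pasting it into the wizard. It assumes the service point is a plain HTTP(S) URL; the function name `normalize_service_point` and the hostname `ceph.lab.local` are hypothetical, and nothing here calls Veeam itself.

```python
from urllib.parse import urlparse


def normalize_service_point(raw: str, default_scheme: str = "https") -> str:
    """Return a tidy scheme://host[:port] string, or raise ValueError.

    Hypothetical helper for illustration only; it does not talk to
    Veeam or to the object storage endpoint.
    """
    candidate = raw.strip()
    # Assume HTTPS when no scheme was typed.
    if "://" not in candidate:
        candidate = f"{default_scheme}://{candidate}"

    parts = urlparse(candidate)
    if parts.scheme not in ("http", "https"):
        raise ValueError(f"unsupported scheme: {parts.scheme!r}")
    if not parts.hostname:
        raise ValueError("service point has no host name")

    # urlparse lowercases the host name; any path or query is dropped.
    port = f":{parts.port}" if parts.port else ""
    return f"{parts.scheme}://{parts.hostname}{port}"


if __name__ == "__main__":
    # Hypothetical lab endpoint, not taken from the screenshots.
    print(normalize_service_point(" ceph.lab.local:7480 "))
```

This only catches formatting mistakes. Whether the endpoint is reachable and the credentials are valid is still something the wizard checks when you click Next.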

Next, specify the bucket and folder that backups should write to. If you wish to set a limit on consumption or set an immutability period, you can do so here as well. Just like with Capacity and Archive Tier in a Scale-Out Repository, immutability requires the bucket to have S3 Object Lock enabled.

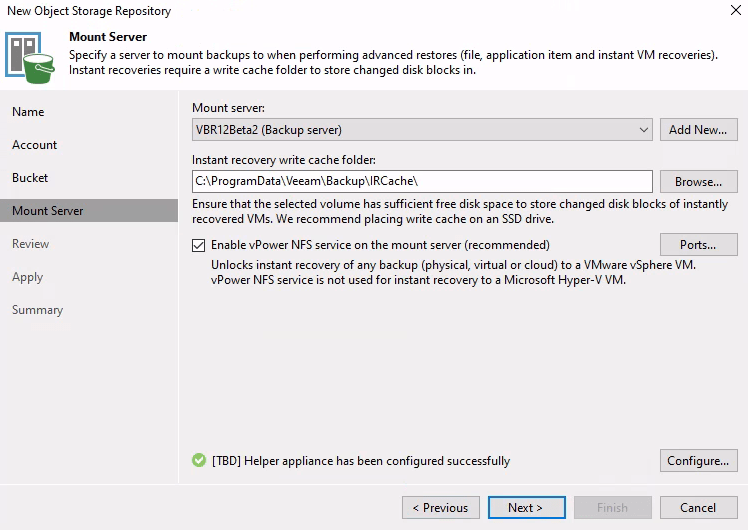
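Because immutability depends on Object Lock, it can be worth confirming that the bucket has it enabled before setting an immutability period. The sketch below assumes a boto3-style S3 client (anything exposing `get_object_lock_configuration`); the helper name `object_lock_enabled` is my own, and the error code it looks for is the one AWS S3 returns, so an S3-compatible system such as Ceph may respond differently.

```python
def object_lock_enabled(s3_client, bucket: str) -> bool:
    """Report whether Object Lock is enabled on a bucket.

    s3_client is assumed to behave like a boto3 S3 client. The helper
    itself is hypothetical and is not part of Veeam or boto3.
    """
    try:
        response = s3_client.get_object_lock_configuration(Bucket=bucket)
    except Exception as exc:
        # boto3's ClientError carries the parsed error in `.response`.
        error = getattr(exc, "response", {}).get("Error", {})
        if error.get("Code") == "ObjectLockConfigurationNotFoundError":
            # Assumption: this is the code AWS S3 uses when the bucket
            # has no Object Lock configuration at all.
            return False
        raise  # anything else (auth, network) is a real problem

    config = response.get("ObjectLockConfiguration", {})
    return config.get("ObjectLockEnabled") == "Enabled"
```

With boto3 installed, the client could be created with `boto3.client("s3", endpoint_url=..., aws_access_key_id=..., aws_secret_access_key=...)`, pointing `endpoint_url` at the same service point entered in the wizard.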

On the next screen, the mount server and write cache folders are specified, just like with other repositories.

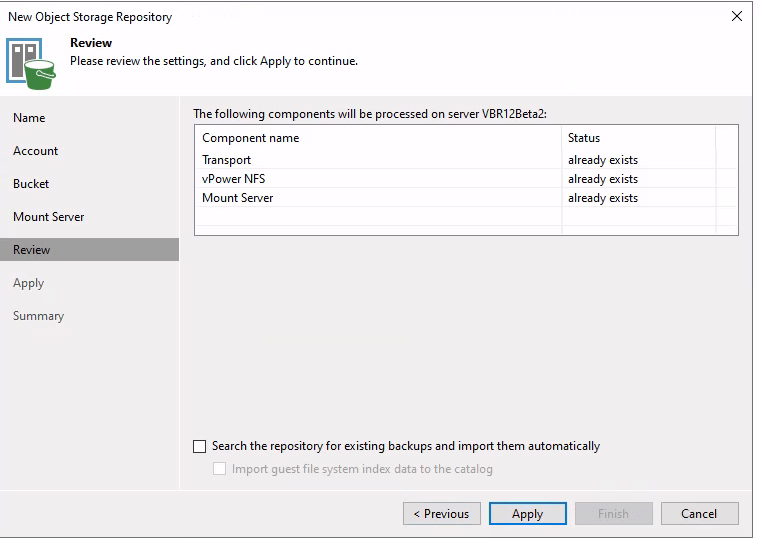

Finally, there is a screen to review settings. If backups already exist on the repository being added, they can be imported at this time.

After clicking Apply, the repository will be created and added to the configuration database. Once this has completed, the repository can be used as a target for backups, either as a standalone repository or as a Scale-Out Backup Repository extent. In my case, I want to mirror the backups and send anything older than 14 days to Wasabi so that I have them offsite, so I’ll be creating a Scale-Out Backup Repository. With version 12, I could alternatively create a backup copy job pointed at a standalone Wasabi repository. This is made easier by Wasabi now appearing as its own repository type, so I no longer have to enter the service URL as in previous versions.

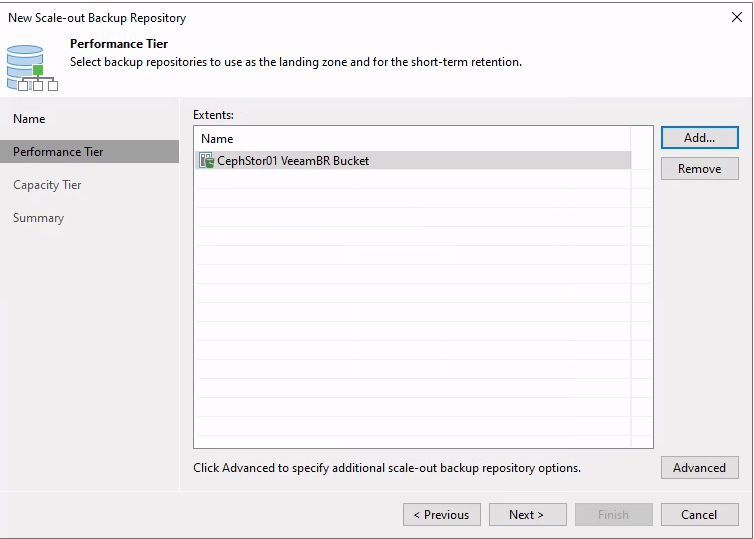
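To make the 14-day window concrete, here is a deliberately simplified sketch of which restore points would fall outside it on a given day. It only compares dates; Veeam applies further conditions before it actually moves data, which this ignores. The function name and the sample dates are made up for illustration.

```python
from datetime import date, timedelta


def older_than_window(restore_points, today, window_days=14):
    """Return restore point dates strictly older than the window.

    Simplified illustration only: a plain date comparison, not the
    product's actual offload logic.
    """
    cutoff = today - timedelta(days=window_days)
    return [point for point in restore_points if point < cutoff]


if __name__ == "__main__":
    # Made-up restore point dates.
    points = [date(2023, 1, 1), date(2023, 1, 17), date(2023, 1, 20)]
    for point in older_than_window(points, today=date(2023, 1, 31)):
        print(point.isoformat())
```

With these sample dates the cutoff is 17 January, so only the 1 January restore point counts as older than the window.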

The process for creating a Scale-Out Backup Repository (SOBR) has remained mostly unchanged. First, I enter the name and description of the SOBR, then select my performance tier extents. In this case, I chose the newly created repository.

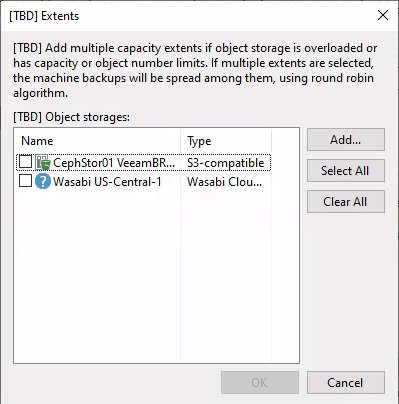

The choice between the data locality and performance placement policies appears to have been removed, though this could be because I am using a beta version of the software. Next, I configure the capacity tier. There is one change here: I can now select multiple capacity tier extents, just as has been possible with the performance tier for years.

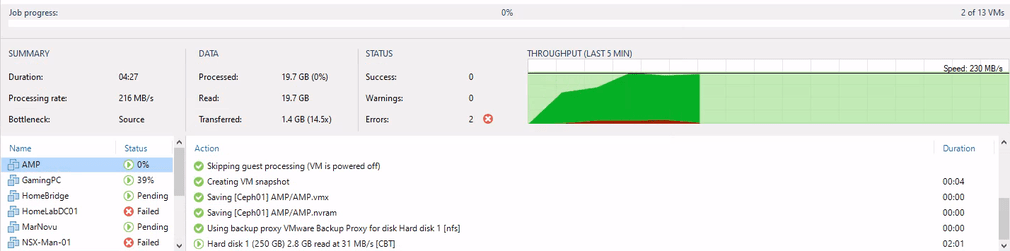

The rest of the process remains unchanged. Once the SOBR is created, I can create a backup job targeting it and start the job. With the job running, I am seeing pretty good speeds, especially considering that the Ceph cluster has only six disks and serves as both the S3 target and the host for the VMware datastore the VMs are located on.